One of my aims for this year has been to attend/talk at what I will class for the purposes of this blog as ‘non-testing’ events, primarily to speak about what on earth testing is and how we can lampoon the myths and legends around it. It gets some really interesting reactions from professionals within other disciplines.

And usually those reactions (much like this particular blog) leave me with more questions than answers!

Huh?

After speaking at a recent event, I was asked an interesting question by an attendee. This guy was great: he reinvented himself every few years into a new part of technology. His current focus is machine learning; his previous life was ‘Big Data’ (more on that later). Anyway, he said (something like):

‘I enjoyed your talk but I think testing as an information provider doesn’t go far enough. If they aren’t actionable insights, then what’s the point?’

This is why I like ‘non-testing’ events: someone challenging a tenet that has been left underchallenged in the testing circles I float around in. So, I dug a little deeper and asked what was behind that assertion:

‘Well, what use is information without insight, the kind you can do something about? It’s getting to the point where there is so much information, providing more doesn’t cut it.’

Really?

On further, further investigation I found he was using the term ‘actionable insight’ as he had in his previous context, within the realm of ‘Big Data.’ For example, gathering data via Google Analytics on session durations and customer journeys. Lots of information, but probably of dubious usefulness without insight, that is, without analysis that includes other axes such as time.

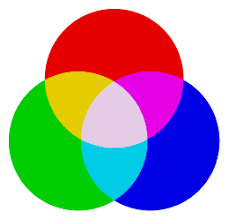

There is an associated model for thinking on the subject of ‘actionable insights’, namely a pyramid. It is based on the Data Information Knowledge Wisdom Pyramid (7). We love our pyramids, although other shaped models for thinking are available, apparently. There is the odd cone in the literature too.

I also enjoyed the heuristics for an actionable insight in the Forbes article (3):

If the story of your testing includes elements of the above, it would likely end up quite compelling. It strikes me that an actionable insight is a fundamentally context-driven entity: it takes into account the wider picture of the situation while being clear and specific. If my testing can gather insights which satisfy the above, I believe my stakeholders would be very satisfied indeed. Maybe you could argue that you are already producing insights of this calibre but you call it information. Good for you if you are.

So?

What immediately set my testing sensibilities on edge from the conversation and subsequent investigation was the implication that testing would produce insights and imply that actions should be taken (1), which takes us into a grey area. After all, what do we ‘know’ as testers? Only what we have observed, through our specific lenses and biases. The person who questioned me at my talk believed that was a position of ‘comfort but not of usefulness.’ More food for thought.

Moreover, those involved with testing are continuously asked:

‘What would you do with this information you have found?’

I’ve been asked this more times than I can remember. Maybe it is time we considered ‘actionable insights’: if this question is going to persist, we stand a better chance of giving a coherent answer. Otherwise the information gleaned from testing might be just another information source drowning in an ever-increasing pool of information, fed by a deepening well of data.

Moreoverover, it showed the real value of getting out into the development community: questions that make you question that which you have accepted for a long, long time.

Reading

1. http://whatis.techtarget.com/definition/actionable-intelligence

2. https://www.techopedia.com/definition/31721/actionable-insight

3. http://www.forbes.com/sites/brentdykes/2016/04/26/actionable-insights-the-missing-link-between-data-and-business-value/#5293082965bb

4. https://www.gapminder.org/videos/will-saving-poor-children-lead-to-overpopulation/

5. http://www.slideshare.net/Medullan/finding-actionable-insights-from-healthcares-big-data

6. http://www.perfbytes.com/manifesto

7. https://en.wikipedia.org/wiki/DIKW_Pyramid

8. http://www.slideshare.net/wealthengineinstitute/building-strategy-using-dataderived-insights-major-gifts/3-Building_Strategy_Using_Data_and